Markov Decision Processes (MDPs) are fundamental frameworks in the fields of decision theory, artificial intelligence, and operations research. They provide a structured way to model decision-making scenarios where outcomes are partly random and partly under the control of a decision-maker. By capturing the dynamics of complex systems and the uncertainty inherent in real-world environments, MDPs enable researchers and practitioners to develop strategies that optimize long-term rewards. This comprehensive overview aims to elucidate the core concepts, mathematical underpinnings, and practical applications of MDPs, highlighting their significance in advancing autonomous decision-making systems and strategic planning.

Introduction to Markov Decision Processes and Their Significance

Markov Decision Processes are mathematical models designed to formalize sequential decision-making in stochastic environments. They extend the classical Markov chain framework by incorporating actions and rewards, enabling the modeling of systems where decisions influence future states and outcomes. The significance of MDPs lies in their ability to yield optimal policies, rules that map each state to an action so as to maximize expected cumulative reward over time, which makes them invaluable in fields such as robotics, finance, healthcare, and logistics. As systems grow increasingly complex, the need for robust decision-making models that can adapt to uncertainty has made MDPs a central tool in the development of intelligent algorithms.

The importance of MDPs also stems from their versatility and broad applicability. They serve as foundational models for reinforcement learning, a branch of machine learning focused on teaching agents to make decisions through trial and error. In robotics, MDPs help in planning navigation and manipulation tasks under uncertainty. In economics and finance, they model investment strategies and market behavior. Their ability to formalize the trade-offs between exploration and exploitation, risk and reward, makes them essential for designing autonomous systems capable of operating effectively in unpredictable environments.

Furthermore, MDPs facilitate the development of algorithms that can learn optimal policies from data, even when the system dynamics are not fully known. This adaptive capability is crucial in real-world scenarios where explicit modeling of every detail is impractical. The theory also provides concrete guarantees: for a finite MDP with a discount factor below 1, an optimal policy exists that is deterministic and depends only on the current state, and standard dynamic programming methods converge to it. These guarantees foster confidence in applying MDPs to critical decision-making tasks. Overall, MDPs have become a cornerstone in the pursuit of intelligent systems capable of autonomous and optimized decision-making.

Key Components and Mathematical Foundations of MDPs

At the core of an MDP are several key components: a set of states, a set of actions, transition probabilities, rewards, and a discount factor. The state space encompasses all possible situations the system can be in, while actions represent the choices available to the decision-maker at each state. Transition probabilities quantify the likelihood of moving from one state to another after executing a specific action, reflecting the stochastic nature of the environment. Rewards are numerical values received after transitions, providing a measure of the immediate benefit of each action. The discount factor determines the importance of future rewards relative to immediate gains, shaping the agent’s long-term strategy.

Mathematically, an MDP is often defined as a tuple \((S, A, P, R, \gamma)\), where \(S\) is the set of states, \(A\) is the set of actions, \(P(s' \mid s, a)\) is the probability of transitioning from state \(s\) to state \(s'\) given action \(a\), \(R(s, a)\) is the reward received after taking action \(a\) in state \(s\), and \(\gamma\) is the discount factor between 0 and 1. The goal is to find a policy, a mapping from states to actions, that maximizes the expected sum of discounted rewards over time. This is achieved through various solution methods that rely on dynamic programming principles, such as Bellman equations, which recursively relate the value of states to the values of successor states.
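To make the tuple concrete, here is a minimal Python sketch of a finite MDP stored as nested lists. The class name `MDP`, the `validate` helper, and the two-state example numbers are all illustrative assumptions, not part of any library. The sketch also assumes a discount factor strictly below 1, as is usual for infinite-horizon problems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MDP:
    """Finite MDP (S, A, P, R, gamma) with states and actions indexed from 0."""
    P: list       # P[s][a][s2] = probability of reaching s2 from s under action a
    R: list       # R[s][a] = immediate reward for taking action a in state s
    gamma: float  # discount factor

    @property
    def n_states(self):
        return len(self.P)

    @property
    def n_actions(self):
        return len(self.P[0])

    def validate(self):
        """Raise ValueError if the tuple is not a well-formed discounted MDP."""
        if not 0.0 <= self.gamma < 1.0:
            raise ValueError("gamma must lie in [0, 1)")
        for s in range(self.n_states):
            for a in range(self.n_actions):
                row = self.P[s][a]
                if len(row) != self.n_states or min(row) < 0.0:
                    raise ValueError(f"bad transition row for s={s}, a={a}")
                if abs(sum(row) - 1.0) > 1e-9:
                    raise ValueError(f"P[{s}][{a}] does not sum to 1")


# Hypothetical two-state, two-action example (numbers chosen for illustration).
toy = MDP(
    P=[[[0.9, 0.1], [0.2, 0.8]],
       [[0.1, 0.9], [0.7, 0.3]]],
    R=[[0.0, -1.0], [2.0, 0.0]],
    gamma=0.9,
)
toy.validate()  # passes silently: every row of P is a probability distribution
```

Checking that each row of the transition table sums to 1 catches the most common modeling mistake before any solver is run.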

The mathematical foundation of MDPs hinges on the concept of the value function, which estimates the expected return starting from a particular state and following a specific policy. The optimal value function, often denoted as \(V^*(s)\), satisfies the Bellman optimality equation, which provides a recursive means to compute the maximum expected reward. Policy iteration and value iteration are two fundamental algorithms that leverage these equations to converge on the optimal policy. These methods iteratively evaluate and improve policies until the best possible strategy for decision-making is identified, ensuring the solution aligns with the goal of reward maximization.
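Written out with the symbols of the tuple definition, and assuming a reward that depends only on the current state and action and a discount factor strictly below 1, the value of a policy \(\pi\), the Bellman optimality equation, and the greedy policy it induces take the following standard form:

\[
V^{\pi}(s) = \mathbb{E}\Big[ \sum_{t=0}^{\infty} \gamma^{t} R\big(s_t, \pi(s_t)\big) \,\Big|\, s_0 = s \Big],
\]
\[
V^*(s) = \max_{a \in A} \Big[ R(s,a) + \gamma \sum_{s' \in S} P(s' \mid s, a)\, V^*(s') \Big],
\]
\[
\pi^*(s) \in \arg\max_{a \in A} \Big[ R(s,a) + \gamma \sum_{s' \in S} P(s' \mid s, a)\, V^*(s') \Big].
\]

The second equation is a fixed-point condition: the optimal value of a state equals the best one-step reward plus the discounted expected optimal value of the successor state.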

Understanding the mathematical structure of MDPs allows for rigorous analysis and efficient computation of optimal policies. It also provides a foundation for extending the models to more complex scenarios, such as partially observable environments or continuous state spaces. The clarity of the mathematical framework ensures that solutions are not only theoretically sound but also practically implementable, enabling the deployment of decision-making agents across various domains with confidence in their performance.

How States, Actions, and Rewards Interact in MDPs

In an MDP, the interaction between states, actions, and rewards forms the core of the decision-making process. When an agent is in a particular state, it selects an action based on its current policy. This action influences the environment, leading to a transition to a new state according to probabilistic rules defined by the transition function. Simultaneously, the agent receives a reward that quantifies the immediate benefit or cost associated with that transition. The interplay of these elements determines how the agent learns and adapts its strategy to maximize cumulative rewards over time.

The dynamics of state transitions are governed by transition probabilities \(P(s' \mid s, a)\), which specify the likelihood of moving from state \(s\) to state \(s'\) after taking action \(a\). These probabilities capture the inherent uncertainty in the environment and are crucial for planning and policy optimization. The reward function \(R(s, a)\) provides immediate feedback, guiding the agent toward more rewarding actions. The combination of probabilistic transitions and reward signals creates a complex landscape where the agent must consider not only immediate gains but also the long-term consequences of its actions.
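The interaction loop described here can be sketched as a small simulator. The transition table `P`, the reward table `R`, and the helper names `step` and `rollout` are hypothetical, chosen only to illustrate sampling a successor state and accumulating a discounted return.

```python
import random

# Hypothetical two-state, two-action MDP: P[s][a][s2] and R[s][a].
P = [[[0.9, 0.1], [0.2, 0.8]],
     [[0.1, 0.9], [0.7, 0.3]]]
R = [[0.0, -1.0], [2.0, 0.0]]


def step(state, action, rng):
    """Sample a successor state from P(. | state, action) and return it with the reward."""
    weights = P[state][action]
    next_state = rng.choices(range(len(weights)), weights=weights)[0]
    return next_state, R[state][action]


def rollout(policy, start, horizon, gamma, rng):
    """Follow a deterministic policy for `horizon` steps; return the discounted return."""
    state, total, discount = start, 0.0, 1.0
    for _ in range(horizon):
        action = policy[state]
        state, reward = step(state, action, rng)
        total += discount * reward
        discount *= gamma
    return total


rng = random.Random(0)                      # fixed seed so runs are repeatable
sample_return = rollout([1, 0], 0, 50, 0.9, rng)
```

Averaging `rollout` over many seeds gives a Monte Carlo estimate of the policy's value at the start state.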

This interaction is often conceptualized as a sequential decision-making process, where each choice influences future states and rewards, creating a chain of dependencies. Effective decision-making involves balancing exploration—trying new actions to discover potentially better rewards—and exploitation—leveraging known strategies that yield high returns. The agent’s goal is to develop a policy that optimally navigates this landscape, considering the stochastic nature of transitions and the cumulative effect of rewards. This balance is central to reinforcement learning algorithms that seek to improve policies through interaction with the environment.
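One common way to strike this balance is epsilon-greedy action selection, sketched below. It is only one of several exploration schemes, and the function name `epsilon_greedy` and the action-value estimates in `q_row` are illustrative assumptions.

```python
import random


def epsilon_greedy(q_row, epsilon, rng):
    """Pick an action index given one state's action-value estimates."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_row))                   # explore: uniform random action
    return max(range(len(q_row)), key=q_row.__getitem__)  # exploit: best-known action


rng = random.Random(0)
q_row = [0.2, 1.5, -0.3]   # hypothetical value estimates for three actions
counts = [0, 0, 0]
for _ in range(1000):
    counts[epsilon_greedy(q_row, 0.1, rng)] += 1
# With epsilon = 0.1 the greedy action (index 1) is chosen most of the time,
# while the other two actions are still tried occasionally.
```

Setting `epsilon` to 0 gives pure exploitation, and setting it to 1 gives pure exploration.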

Ultimately, the interaction between states, actions, and rewards in an MDP encapsulates the essence of strategic decision-making under uncertainty. By understanding how these elements influence each other, researchers can design algorithms that learn optimal or near-optimal policies. This understanding enables autonomous systems to operate effectively in dynamic, unpredictable environments, making decisions that are both rational and contextually appropriate over time.

The Role of Transition Probabilities in Decision-Making

Transition probabilities are a fundamental component of MDPs that encapsulate the uncertainty inherent in dynamic environments. They specify the likelihood of moving from one state to another after executing a particular action, effectively modeling the stochastic nature of real-world systems. These probabilities influence the strategic planning process, as they determine the expected outcomes of different actions and shape the long-term value associated with each decision. Accurate modeling of transition probabilities is essential for developing reliable policies that perform well under uncertainty.

In decision-making, transition probabilities serve as the probabilistic backbone that guides the estimation of future states and rewards. They allow the agent to evaluate the expected consequences of actions, considering all possible outcomes weighted by their likelihoods. This probabilistic foresight is crucial in environments where outcomes are not deterministic, enabling the agent to make informed choices that maximize expected rewards over time. The transition model influences the computation of value functions and policy updates, directly impacting the quality and robustness of the resulting strategies.
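The weighting of outcomes by their likelihoods amounts to a one-step expected backup. The sketch below assumes a hypothetical two-state model and a given value estimate `V`. The names `q_value` and `greedy_action` are illustrative.

```python
def q_value(P, R, V, gamma, s, a):
    """Expected return of taking a in s, then receiving V at the successor state."""
    expected_next = sum(p * V[s2] for s2, p in enumerate(P[s][a]))
    return R[s][a] + gamma * expected_next


def greedy_action(P, R, V, gamma, s):
    """Action with the highest one-step lookahead value in state s."""
    return max(range(len(P[s])), key=lambda a: q_value(P, R, V, gamma, s, a))


# Hypothetical model and value estimate, for illustration only.
P = [[[0.9, 0.1], [0.2, 0.8]],
     [[0.1, 0.9], [0.7, 0.3]]]
R = [[0.0, -1.0], [2.0, 0.0]]
V = [1.0, 10.0]

q_stay = q_value(P, R, V, 0.9, 0, 0)   # 0.0 + 0.9 * (0.9 * 1 + 0.1 * 10)
q_move = q_value(P, R, V, 0.9, 0, 1)   # -1.0 + 0.9 * (0.2 * 1 + 0.8 * 10)
best = greedy_action(P, R, V, 0.9, 0)
```

In this example the second action costs an immediate reward of -1 yet has the higher lookahead value, because it makes the high-value state much more likely.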

The importance of transition probabilities extends to their role in learning algorithms. In many practical scenarios, these probabilities are not known beforehand and must be estimated from data through methods such as model-based reinforcement learning. Accurate estimation of transition dynamics allows for better planning and policy optimization. Conversely, inaccuracies in these probabilities can lead to suboptimal decisions, highlighting the need for robust learning techniques that can handle model uncertainty and adaptively refine transition estimates as more data becomes available.
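A simple way to estimate transition probabilities from data is to count observed transitions and normalize, which gives the maximum-likelihood estimate. The sketch below assumes experience is logged as hypothetical `(state, action, next_state)` triples. Leaving unvisited state-action pairs as `None` is a design choice made here for illustration.

```python
from collections import Counter


def estimate_transitions(samples, n_states, n_actions):
    """Maximum-likelihood estimate of P(s2 | s, a) from (s, a, s2) triples."""
    counts = Counter(samples)  # maps (s, a, s2) to the number of times it was seen
    P_hat = []
    for s in range(n_states):
        P_hat.append([])
        for a in range(n_actions):
            row = [counts[(s, a, s2)] for s2 in range(n_states)]
            total = sum(row)
            # No data for (s, a): leave the estimate undefined rather than guess.
            P_hat[s].append([c / total for c in row] if total else None)
    return P_hat


# Hypothetical logged experience.
samples = [(0, 1, 1), (0, 1, 1), (0, 1, 0), (0, 1, 1), (1, 0, 1)]
P_hat = estimate_transitions(samples, n_states=2, n_actions=2)
```

With few samples these estimates are noisy, which is why the estimate for a pair seen only once (here state 1, action 0) should be treated with caution.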

In summary, transition probabilities are vital to the functioning of MDPs, providing the probabilistic framework needed for anticipatory decision-making. They enable the modeling of complex, uncertain environments and underpin the algorithms that derive optimal policies. Understanding and accurately estimating transition dynamics are critical steps in deploying MDP-based solutions in real-world applications, where uncertainty is a defining feature of the operational landscape.

Techniques for Solving and Optimizing MDPs

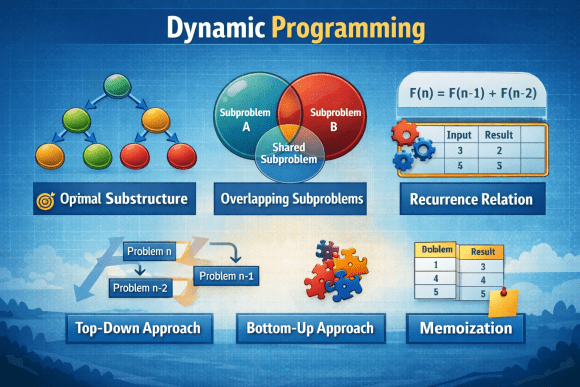

Solving an MDP involves finding the optimal policy that maximizes the expected cumulative reward. Several classical techniques have been developed for this purpose, with dynamic programming methods such as value iteration and policy iteration being among the most prominent. Value iteration repeatedly updates the value function based on the Bellman optimality equation until convergence, and an optimal policy is then read off by acting greedily with respect to the converged values. Policy iteration alternates between policy evaluation (computing the value of a given policy) and policy improvement (updating the policy greedily with respect to the current value estimates) until the policy no longer changes. For a finite MDP with a discount factor below 1, value iteration converges to the optimal value function at a geometric rate governed by the discount factor, and policy iteration reaches an optimal policy after finitely many improvement steps. Both methods are fundamental to solving finite MDPs.
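Both dynamic programming methods can be sketched in a few lines of plain Python. The two-state model below is hypothetical, the function names are illustrative, and policy evaluation is done by repeated backups rather than by solving the linear system exactly, which is an assumption made to keep the sketch dependency-free.

```python
# Hypothetical two-state, two-action MDP: P[s][a][s2], R[s][a], discount GAMMA.
P = [[[0.9, 0.1], [0.2, 0.8]],
     [[0.1, 0.9], [0.7, 0.3]]]
R = [[0.0, -1.0], [2.0, 0.0]]
GAMMA = 0.9
STATES = range(len(P))


def backup(V, s, a):
    """One-step lookahead: immediate reward plus discounted expected next value."""
    return R[s][a] + GAMMA * sum(p * V[s2] for s2, p in enumerate(P[s][a]))


def greedy(V):
    """Policy that picks the best lookahead action in every state."""
    return [max(range(len(P[s])), key=lambda a: backup(V, s, a)) for s in STATES]


def value_iteration(tol=1e-10):
    V = [0.0 for _ in STATES]
    while True:
        V_new = [max(backup(V, s, a) for a in range(len(P[s]))) for s in STATES]
        delta = max(abs(x - y) for x, y in zip(V_new, V))
        V = V_new
        if delta < tol:              # values have stopped changing
            return V, greedy(V)


def evaluate(policy, tol=1e-10):
    """Iterative policy evaluation for a fixed deterministic policy."""
    V = [0.0 for _ in STATES]
    while True:
        V_new = [backup(V, s, policy[s]) for s in STATES]
        delta = max(abs(x - y) for x, y in zip(V_new, V))
        V = V_new
        if delta < tol:
            return V


def policy_iteration():
    policy = [0 for _ in STATES]     # arbitrary starting policy
    while True:
        V = evaluate(policy)         # policy evaluation
        improved = greedy(V)         # policy improvement
        if improved == policy:       # stable policy: stop
            return V, policy
        policy = improved


V_vi, pi_vi = value_iteration()
V_pi, pi_pi = policy_iteration()
```

On this small example both routines should arrive at the same policy and, up to the tolerance, the same values. Value iteration needs many cheap sweeps, whereas policy iteration needs only a couple of improvement steps, each with a more expensive evaluation.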

Another approach to solving MDPs involves linear programming, where the problem is formulated as an optimization task with linear constraints derived from the Bellman equations. This technique is particularly useful in large-scale problems or when the model includes additional constraints. Approximate dynamic programming and reinforcement learning algorithms, such as Q-learning and deep Q-networks, enable solving MDPs when the model dynamics are unknown or too complex for exact solutions. These methods rely on sampling and iterative updates, allowing agents to learn optimal policies through interaction with the environment without explicit knowledge of transition probabilities.
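As an illustration of learning from sampled interaction, here is a minimal tabular Q-learning sketch. The tables `P` and `R` play the role of a hypothetical environment and are used only to generate samples; the learner never reads them directly. The constant step size, the uniformly random behavior policy, and the step count are simplifying assumptions rather than recommended settings.

```python
import random

# Hypothetical environment, hidden from the learner: P[s][a][s2] and R[s][a].
P = [[[0.9, 0.1], [0.2, 0.8]],
     [[0.1, 0.9], [0.7, 0.3]]]
R = [[0.0, -1.0], [2.0, 0.0]]


def q_learning(n_steps=200_000, gamma=0.9, alpha=0.01, seed=0):
    rng = random.Random(seed)
    n_states, n_actions = len(P), len(P[0])
    Q = [[0.0] * n_actions for _ in range(n_states)]
    s = 0
    for _ in range(n_steps):
        a = rng.randrange(n_actions)   # random behavior policy (Q-learning is off-policy)
        s2 = rng.choices(range(n_states), weights=P[s][a])[0]  # environment samples s2
        target = R[s][a] + gamma * max(Q[s2])  # bootstrapped one-step target
        Q[s][a] += alpha * (target - Q[s][a])  # move the estimate toward the target
        s = s2
    return Q


Q = q_learning()
policy = [max(range(len(row)), key=row.__getitem__) for row in Q]
```

Because the update bootstraps from `max(Q[s2])`, the learned table approximates the optimal action values even though the data come from a purely random policy.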

Recent advancements have focused on scalable and efficient algorithms capable of handling high-dimensional state and action spaces. Techniques such as function approximation, deep learning, and hierarchical reinforcement learning have expanded the applicability of MDP